Tame the Stream: Handling Backpressure in Node.js Like a Pro

Why your fast data streams could crash your app—and how to fix it elegantly with proper backpressure handling

Principal Technical Consultant at GeekyAnts.

Streaming Isn’t Always Smooth!

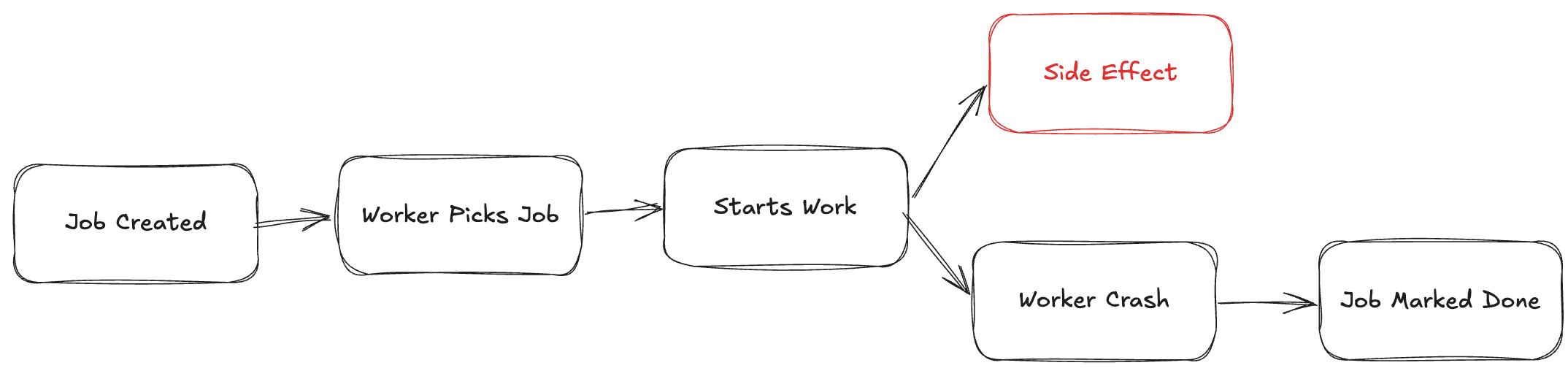

Node.js Streams are a great way to handle large data without loading everything into memory.

But without managing flow properly, you can flood your memory and crash the app.

Enter: Backpressure!

What is Backpressure?

Imagine a water hose — if water flows faster than the outlet can handle, it overflows.

In Node.js:

Producer = Source pushing data (e.g., File Read Stream)

Consumer = Destination handling data (e.g., Writing to DB, HTTP Response)

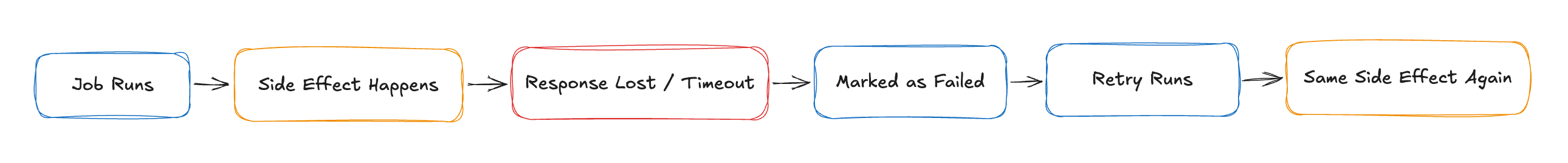

Backpressure happens when the consumer is slower than the producer.

How Streams Normally Work (Without Proper Handling)

Example scenario:

    const fs = require('fs');

    const readable = fs.createReadStream('largeFile.txt');
    const writable = fs.createWriteStream('destination.txt');

    readable.pipe(writable);

pipe() automatically handles backpressure.

Problem: If you consume the stream manually with .on('data'), that protection is gone and you have to manage the flow yourself.

Bad Manual Handling Example:

    readable.on('data', (chunk) => {
      writable.write(chunk); // 🚨 No control if writable buffer fills up!
    });

If writable.write() returns false, you're supposed to pause the readable stream. If you don't, you risk memory overflow!
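To see how quickly an unchecked buffer grows, here is a minimal sketch. It assumes a made-up slow destination (`slowSink`) with an explicit 16 KiB `highWaterMark`, and writes 100 chunks of 64 KiB while ignoring the return value of `write()`, just like the handler above. The names and sizes are illustrative only.

```javascript
const { Writable } = require('node:stream');

// A stand-in for a slow destination (database, network socket, ...).
const slowSink = new Writable({
  highWaterMark: 16 * 1024, // 16 KiB threshold, set explicitly for the demo
  write(chunk, encoding, callback) {
    setTimeout(callback, 1); // pretend every chunk takes a while to flush
  },
});

const chunk = Buffer.alloc(64 * 1024); // one 64 KiB chunk
let ignoredSignals = 0;

// Mimics the 'data' handler above: write everything, ignore the return value.
for (let i = 0; i < 100; i++) {
  if (slowSink.write(chunk) === false) ignoredSignals++;
}

console.log(ignoredSignals);          // 100: every write asked us to stop
console.log(slowSink.writableLength); // 6553600 bytes queued in memory
```

The stream asked for a pause on every single write, yet about 6.25 MiB ended up queued behind a 16 KiB threshold. With a real multi-gigabyte file, that queue is what eats your RAM.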

The Right Way: Managing Backpressure Yourself

Correct approach:

    readable.on('data', (chunk) => {
      if (!writable.write(chunk)) {
        readable.pause(); // ✋ Pause reading if writable is overwhelmed
      }
    });

    writable.on('drain', () => {
      readable.resume(); // ✅ Resume once writable buffer is free
    });

Explanation:

.write(chunk) returns false once the internal buffer reaches its highWaterMark.

.pause() and .resume() control the flow to prevent memory pressure, and the 'drain' event tells you when it is safe to resume.
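The pause-and-resume pattern can be wrapped in a small helper that also ends the destination and surfaces errors, two things the short snippet leaves out. This is only a sketch: `copyWithBackpressure` is a hypothetical name, not a Node.js API, and the in-memory streams below stand in for a real file and a real slow destination.

```javascript
const { Readable, Writable } = require('node:stream');

// Hypothetical helper: copies `readable` into `writable` while respecting
// backpressure, and resolves once the destination has flushed everything.
function copyWithBackpressure(readable, writable) {
  return new Promise((resolve, reject) => {
    readable.on('data', (chunk) => {
      // write() returns false once the buffered data reaches highWaterMark.
      if (!writable.write(chunk)) {
        readable.pause();
      }
    });
    // 'drain' fires when the buffer has emptied and writing can continue.
    writable.on('drain', () => readable.resume());
    // Without this, the destination never learns that the source is done.
    readable.on('end', () => writable.end());
    writable.on('finish', resolve);
    readable.on('error', reject);
    writable.on('error', reject);
  });
}

// Demo with in-memory streams; the 1-byte highWaterMark forces a pause
// after every chunk so the backpressure path is actually exercised.
const source = Readable.from(['a', 'b', 'c', 'd']);
const received = [];
const slowSink = new Writable({
  highWaterMark: 1,
  write(chunk, encoding, callback) {
    received.push(chunk.toString());
    setTimeout(callback, 5); // simulate a slow consumer
  },
});

const done = copyWithBackpressure(source, slowSink);
done.then(() => console.log(received.join('')));
```

Every chunk still arrives in order; the source simply waits whenever the sink falls behind.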

When You Get Backpressure For Free: pipe()

The .pipe() method internally manages backpressure.

Best practice: Use .pipe() whenever possible unless you have special needs.

Example:

readable.pipe(writable);

- Safe, memory-friendly, easy
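One caveat: .pipe() manages backpressure, but it does not forward errors or close the destination if the source fails. If you need that, `pipeline` from `node:stream/promises` is worth a look. A minimal sketch, assuming in-memory streams in place of real files (`copyStream` and `sink` are illustrative names):

```javascript
const { Readable, Writable } = require('node:stream');
const { pipeline } = require('node:stream/promises');

// Illustrative wrapper: pipeline() applies backpressure like pipe() and
// rejects the returned promise if any stream in the chain fails.
async function copyStream(source, destination) {
  await pipeline(source, destination);
}

// In-memory stand-ins; fs.createReadStream / fs.createWriteStream
// can be passed in exactly the same way.
const chunks = [];
const sink = new Writable({
  write(chunk, encoding, callback) {
    chunks.push(chunk.toString());
    callback();
  },
});

const result = copyStream(Readable.from(['hello ', 'world']), sink)
  .then(() => chunks.join(''));

result.then((text) => console.log(text));
```

Because the promise rejects on failure, a plain try/catch around `await copyStream(...)` is enough to handle errors from either side.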

Real-World Example: Uploading Files to S3

Imagine uploading large video files:

Read file as a stream.

Upload to S3 as a writable stream.

Must handle backpressure or your server dies under heavy load.

Code Sketch:

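    const { createReadStream } = require('fs');
    const { S3Client } = require('@aws-sdk/client-s3');
    const { Upload } = require('@aws-sdk/lib-storage');

    // Region and credentials are resolved from the environment by default.
    const s3Client = new S3Client({});

    async function uploadLargeFile(filePath, bucket, key) {
      const fileStream = createReadStream(filePath);

      // Upload performs a multipart upload, reading the stream part by part.
      const upload = new Upload({
        client: s3Client,
        params: {
          Bucket: bucket,
          Key: key,
          Body: fileStream,
        },
      });

      await upload.done();
    }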

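- AWS's SDK internally handles backpressure too: Upload reads the stream one part at a time and keeps only a limited number of parts in flight, so memory use stays bounded.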

When Ignoring Backpressure Becomes a Disaster 🚨

High memory consumption (visible in htop, pm2, etc.)

Random server crashes

Slow I/O performance

Increased GC pauses
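To catch these symptoms before they turn into a crash, one option is to log how full a stream's buffer is alongside the process memory. The helper below is a sketch with a hypothetical name (`describeBuffer`); the stalled sink exists only to make the numbers predictable.

```javascript
const { Writable } = require('node:stream');

// Hypothetical helper: summarises how full a writable's internal buffer is.
function describeBuffer(writable) {
  return {
    bufferedBytes: writable.writableLength,
    highWaterMark: writable.writableHighWaterMark,
    // true when write() has returned false and 'drain' has not fired yet
    needsDrain: writable.writableNeedDrain,
    // resident set size of the whole process, rounded to MiB
    rssMiB: Math.round(process.memoryUsage().rss / 1024 / 1024),
  };
}

// A sink that never finishes a write, so everything stays buffered.
const stalledSink = new Writable({
  highWaterMark: 1024,
  write() { /* never calls back */ },
});

stalledSink.write(Buffer.alloc(4096)); // 4 KiB against a 1 KiB threshold

const report = describeBuffer(stalledSink);
console.log(report.bufferedBytes, report.highWaterMark, report.needsDrain);
// 4096 1024 true
```

If bufferedBytes keeps climbing far above highWaterMark in your logs, something upstream is ignoring the return value of write().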

Bonus Tip: HighWaterMark Settings

- Streams have internal buffer thresholds.

- You can configure the size using highWaterMark.

Example:

const readable = fs.createReadStream('largeFile.txt', { highWaterMark: 16 * 1024 }); // 16 KB buffer

- Tune it based on your system and use case.
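On a file read stream, highWaterMark sets how much is read per chunk. On a writable, it is the threshold at which write() starts returning false. The sketch below illustrates the writable side with a hypothetical helper (`writesUntilFull`) that counts how many 1 KiB writes it takes before a stalled stream first reports it is full.

```javascript
const { Writable } = require('node:stream');

// Hypothetical helper: counts write() calls up to and including the first
// one that returns false, for a given highWaterMark and chunk size.
function writesUntilFull(highWaterMark, chunkSize) {
  const stalled = new Writable({
    highWaterMark,
    write() { /* never calls back, so nothing ever drains */ },
  });
  const chunk = Buffer.alloc(chunkSize);
  let calls = 1;
  while (stalled.write(chunk)) calls++;
  return calls;
}

console.log(writesUntilFull(16 * 1024, 1024)); // 16
console.log(writesUntilFull(64 * 1024, 1024)); // 64
```

A larger value means fewer pauses but more memory held per stream, so the right number depends on how many streams run concurrently.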

Conclusion: Stream Smart, Stream Safe!

Node.js Streams are a superpower.

But without respecting backpressure, your app can get wrecked.

Understand .write(), the 'drain' event, .pause(), and .resume(), and always monitor memory for large transfers.

✅ Small effort.

✅ Huge stability boost.

📢 Call to Action:

"Have you ever had a server crash because of uncontrolled streams? Share your war stories or tips below! 🚀"