Stop Using JSON.parse on Huge Payloads: Streaming JSON in Node.js

Let's handle massive JSON data in Node.js using streams instead of blocking your server with JSON.parse()

Principal Technical Consultant at GeekyAnts.

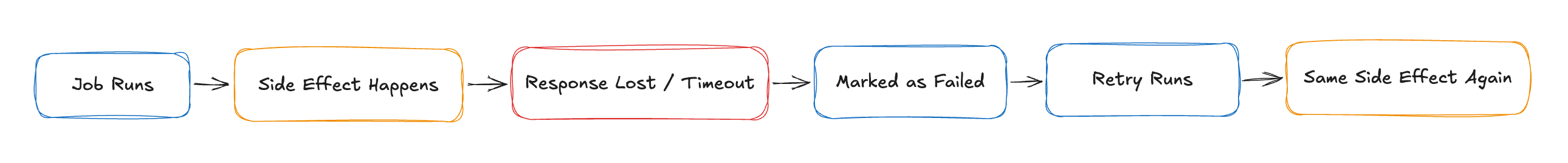

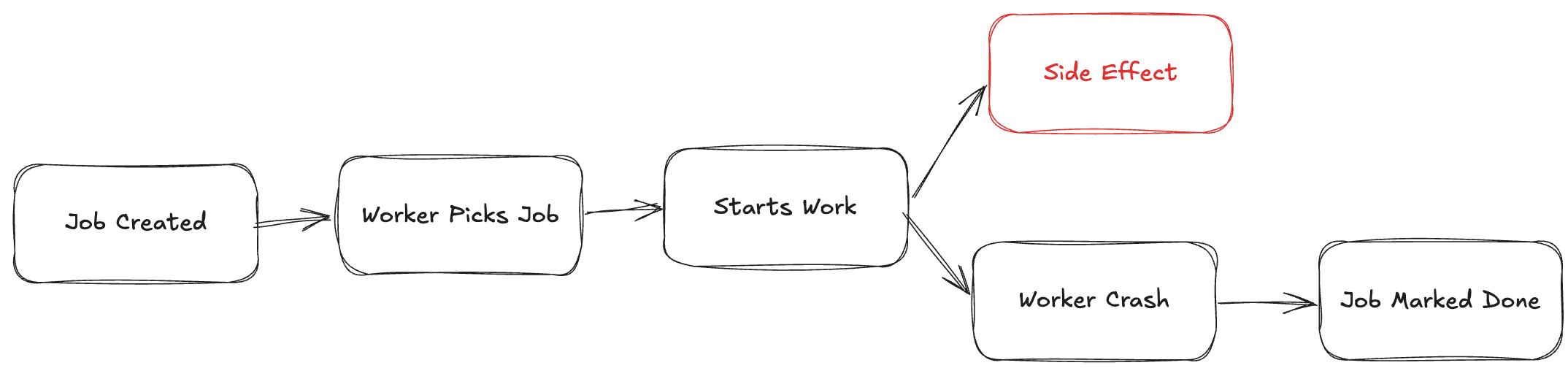

Think JSON.parse() is harmless? Think again.

When you're dealing with massive logs, analytics dumps, or API exports, that innocent line of code can quietly bring your Node.js app to its knees — eating up memory, blocking the event loop, and even crashing your server.

In this post, I'll show you the smarter, scalable way to handle huge JSON payloads using streaming parsers that process data piece by piece — no memory explosions, no drama.

🚨 Introduction: The Hidden Danger of JSON.parse()

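Everyone uses JSON.parse(), but it only works on a complete string: the entire payload has to be in memory before parsing starts, and the full parsed object tree is then built next to it. This is fine for small payloads, but deadly for: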

Logs

API exports

Analytics events

Large nested objects

Result?

High memory usage

Long GC pauses

App crashes or OOM (Out of Memory) errors

🔍 Why JSON.parse() Fails at Scale

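const fs = require('fs');

// Reads the whole file into memory as one string, then parses it synchronously
const data = fs.readFileSync('huge.json', 'utf8');
const parsed = JSON.parse(data); // 💣 if the file is 200MB or more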

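Memory spikes because the raw file contents and the fully parsed object tree are held in memory at the same time

JSON.parse() is synchronous, so the Node.js event loop is blocked until it returns → the server becomes unresponsive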
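
To see both effects on your own machine, a rough measurement like the one below can help. It is only a sketch: `measureParse` and the synthetic `users` payload are made up for illustration, and the heap numbers reported by `process.memoryUsage()` will vary between runs because of garbage collection.

```javascript
const { performance } = require('node:perf_hooks');

// Hypothetical helper: builds a synthetic payload shaped like { users: [...] },
// then times JSON.parse() on it and reports how much the heap grew.
function measureParse(itemCount) {
  const users = Array.from({ length: itemCount }, (_, i) => ({ id: i, name: `user-${i}` }));
  const text = JSON.stringify({ users });

  const heapBefore = process.memoryUsage().heapUsed;
  const start = performance.now();
  const parsed = JSON.parse(text); // synchronous: nothing else runs until this returns
  const elapsedMs = performance.now() - start;
  const heapAfter = process.memoryUsage().heapUsed;

  return {
    items: parsed.users.length,
    payloadMB: Buffer.byteLength(text) / 1024 / 1024,
    elapsedMs,
    heapDeltaMB: (heapAfter - heapBefore) / 1024 / 1024,
  };
}

console.log(measureParse(200000));
```

Raising the item count makes the trend visible: parse time and heap growth both scale with the payload, and no request can be served while `JSON.parse()` is running.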

💡 The Solution: Streaming JSON Parsing

Meet the heroes:

stream-json

JSONStream

oboe.js (browser + Node)

They allow:

Chunked reading of JSON

Event-based parsing of deeply nested objects

Low memory footprint
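
To make "chunked reading" concrete, here is a toy illustration of the idea, not how any of these libraries is actually implemented. The `createObjectSplitter` helper is hypothetical and assumes the root of the document is an array of objects delivered as string chunks. It tracks brace depth and string state so it can hand over each object as soon as its closing brace arrives, even when a chunk boundary cuts an object in half.

```javascript
// Toy incremental splitter (illustration only, use a real library in production).
// Assumption: the root value is an array whose elements are objects.
function createObjectSplitter(onObject) {
  let depth = 0;        // nesting depth of { } inside the root array
  let inString = false; // currently inside a "..." literal?
  let escaped = false;  // was the previous character a backslash?
  let current = '';     // text of the object being assembled

  return function write(chunk) {
    for (const ch of chunk) {
      if (depth > 0) current += ch;
      if (inString) {
        // Braces inside strings must not change the depth
        if (escaped) escaped = false;
        else if (ch === '\\') escaped = true;
        else if (ch === '"') inString = false;
        continue;
      }
      if (ch === '"') {
        inString = true;
      } else if (ch === '{') {
        if (depth === 0) current = ch; // a new top-level element starts here
        depth += 1;
      } else if (ch === '}') {
        depth -= 1;
        if (depth === 0) {
          onObject(JSON.parse(current)); // only one small object is parsed at a time
          current = '';
        }
      }
    }
  };
}

const write = createObjectSplitter((user) => console.log('User:', user));
write('[{"id":1,"name":"Al');          // nothing emitted yet
write('ice"},{"id":2,"name":"Bob"}]'); // emits Alice, then Bob
```

At no point does the full document exist in memory; only the object currently being assembled does. Real streaming parsers generalise this to arbitrary JSON and proper error reporting.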

🛠️ Example with stream-json

📁 Sample: huge.json

{
  "users": [
    { "id": 1, "name": "Alice" },
    { "id": 2, "name": "Bob" },
    ...
  ]
}

✅ Stream it:

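const fs = require('fs');
const { parser } = require('stream-json');
const { pick } = require('stream-json/filters/Pick');
const { streamArray } = require('stream-json/streamers/StreamArray');

// huge.json has an object at the root, so select the "users" array first:
// streamArray() only accepts an array as the top-level value
const stream = fs.createReadStream('huge.json')
  .pipe(parser())
  .pipe(pick({ filter: 'users' }))
  .pipe(streamArray());

// Each chunk is { key, value }: the array index and the parsed element
stream.on('data', ({ value }) => {
  console.log('User:', value);
});

stream.on('end', () => {
  console.log('Done processing large JSON.');
});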

🎯 Result:

Processes one item at a time

Uses minimal memory

Ideal for ETL, analytics, or data migration scripts
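
One caveat for ETL-style work: a `data` handler does not wait for asynchronous work, so items keep arriving while earlier ones are still being saved. A possible way around this, sketched below, is to consume the stream with `for await...of`, which only asks for the next item once the loop body has finished. `processItems` is a hypothetical helper, and `Readable.from()` stands in for the stream-json pipeline so the sketch runs on its own.

```javascript
const { Readable } = require('node:stream');

// Consumes any object-mode stream of { key, value } chunks and awaits the
// handler for each item. The loop pulls the next chunk only after the body
// completes, so only a small internal buffer is read ahead of the handler.
async function processItems(source, handleItem) {
  let count = 0;
  for await (const { value } of source) {
    await handleItem(value);
    count += 1;
  }
  return count;
}

// Stand-in for the parser/streamArray pipeline (same { key, value } shape)
const fakeSource = Readable.from([
  { key: 0, value: { id: 1, name: 'Alice' } },
  { key: 1, value: { id: 2, name: 'Bob' } },
]);

processItems(fakeSource, async (user) => {
  console.log('Saving user', user.id); // e.g. an awaited database write
}).then((count) => console.log(`Processed ${count} items`));
```

In a real script, the `stream` variable from the stream-json example would be passed in place of `fakeSource`.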

📉 Performance Benchmark

| Method | Memory Used | Time Taken |
| --- | --- | --- |
| JSON.parse() | 500MB+ | 8s |
| stream-json | ~50MB | 9s |

✅ Slightly slower, but way safer and much more scalable

🧠 When to Use Streaming JSON Parsing

Use streaming if:

File or payload size > 10MB

You’re only processing parts of the JSON

You're building:

Log processors

Data transformers

API data sync tools
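
The size rule can be turned into a small guard. This is a minimal sketch: `chooseStrategy` and `chooseStrategyForFile` are hypothetical helpers, and the 10MB cut-off is just the rule of thumb from this section, not a hard limit.

```javascript
const fs = require('node:fs');

// 10MB cut-off taken from the rule of thumb; tune it for your workload
const STREAM_THRESHOLD_BYTES = 10 * 1024 * 1024;

// Hypothetical helper: returns 'stream' or 'parse' for a size in bytes
function chooseStrategy(sizeBytes, threshold = STREAM_THRESHOLD_BYTES) {
  return sizeBytes > threshold ? 'stream' : 'parse';
}

// Hypothetical helper: checks the size on disk without reading the file
function chooseStrategyForFile(filePath) {
  return chooseStrategy(fs.statSync(filePath).size);
}

console.log(chooseStrategy(2 * 1024 * 1024));   // 'parse'
console.log(chooseStrategy(200 * 1024 * 1024)); // 'stream'
```

For HTTP payloads, the `Content-Length` header can play the same role as the file size when the sender provides it.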

🚫 Gotchas & Best Practices

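Streaming parsers still need well-formed JSON (no trailing commas, etc.), and a syntax error may only surface mid-stream, after earlier items have already been processed

Use libraries like stream-json with a known structure (e.g., array or object roots)

You can combine stream-json with Transform streams for batch processing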
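
The batching idea can be sketched with Node's built-in `Transform` class. `createBatcher` is a hypothetical helper, not part of stream-json; it assumes its input is the `{ key, value }` chunks that `streamArray()` emits and groups the values into arrays of a fixed size.

```javascript
const { Transform } = require('node:stream');

// Hypothetical helper: groups incoming { key, value } chunks into arrays of
// `size` values, so downstream code can do one bulk write per batch.
function createBatcher(size) {
  let batch = [];
  return new Transform({
    objectMode: true,
    transform({ value }, _encoding, callback) {
      batch.push(value);
      if (batch.length >= size) {
        const full = batch;
        batch = [];
        callback(null, full); // emit a full batch
      } else {
        callback(); // keep collecting
      }
    },
    flush(callback) {
      // Emit whatever is left when the input ends
      if (batch.length > 0) this.push(batch);
      callback();
    },
  });
}
```

Appended to a stream-json pipeline, for example `.pipe(streamArray()).pipe(createBatcher(500))`, each `data` event would then carry an array of up to 500 values instead of a single item.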

✅ Conclusion: Stream, Don’t Crash

If you’re working with large JSON files, stop loading them all at once.

Use stream-based parsing for safer, cleaner, and more scalable Node.js apps.

📣 Call to Action:

"Have you ever crashed a Node app because of a giant JSON? Let’s hear your horror stories—or your fixes—in the comments. 😅"